As artificial intelligence (AI) becomes more integrated into everyday work, managers have to navigate not only how employees use it, but also how they use it themselves. AI tools can boost productivity, but they also reshape what trust, accountability and expectations look like, so leaders need new norms. Viewing these tools as “copilots” can help shift perspective: like a copilot who assists a pilot without taking over the controls, AI tools support the work and are best used to improve, rather than replace, thinking, judgment and communication.

A Monday Scenario: What Are We Rewarding Now?

Consider this scenario: It’s Monday morning and a manager opens an employee’s project update that sounds like it was written by a seasoned consultant: crisp bullet points, clear takeaways and not a typo in sight. The employee turned it around in 20 minutes with help from an AI copilot. The manager is impressed and forwards it up the chain, then hesitates. Why?

Impressive, but what are we rewarding now? The thinking process or the tool? Is that a problem? And what becomes the new baseline — this level of polish, every time, on the same deadline?

How AI Can Change Performance and Accountability

The reality is that AI copilots aren’t just accelerating tasks; they are changing how managers judge performance and accountability. Two questions loom: should managers evaluate how work gets done, and who owns the result? Without clear, transparent expectations for ethical AI use, trust and accountability can quickly erode.

What’s Changing in the Manager–Employee Relationship?

The use of AI can reduce visibility into the work process and shift expectations for speed and quality. This can become problematic because it can create gaps between employees with different levels of access or skill. As with any technology, skill and level of comfort vary greatly, leaving some feeling left out, or worse, left behind.

In the world of AI, prompt engineering is key. Some employees have stronger prompting skills or even access to better tools, leading to inequities that can be difficult to overcome. Employees lacking in skill or access may feel increased pressure from new ideals such as “If AI can do it, why can’t you?”, leading to unrealistic expectations and workloads. As noted by Marimon et al. (2025), generative AI can influence employee engagement overall. If it’s seen as a useful aid that improves quality and speed, engagement may rise. If it feels unreliable or threatening and adds extra effort, work becomes tedious and stress and burnout can increase. (See disclaimer 1)

On the flip side, those with advanced AI skills may feel added scrutiny when managers struggle to assess the writing, analysis or accuracy of work that AI contributed to. Well-written, precise prompts tend to produce stronger AI output, which can make it harder to tell where the employee’s contribution ends and the tool’s begins.

So, how can managers reward critical thinking and quality output while ensuring accuracy, as well as bringing less-skilled employees up to speed?

The Biggest People Management Risks: An Organizational Behavior Lens

These concerns highlight potential consequences that can surface when AI use is unclear, uneven or treated as “cheating.” This is when a manager’s people skills come into play, instilling a sense of trust and psychological safety among team members. Those with higher AI skills may be torn between two fears: being judged by coworkers or supervisors for using AI, and being “caught” if they do not disclose it.

Employees with uneven access or differing comfort levels may feel a lack of equity and inclusion in the workplace. Fairness, motivation, accountability and integrity are equally important when it comes to meeting employees where they are in their AI use.

Organizationally, managers are responsible for ensuring work quality and accuracy. Questions about who owns AI-related errors, bias, tone or compliance issues can cause confusion, while over-reliance can weaken critical thinking and domain mastery. From an organizational perspective, learning and development can play an important role in providing clarity and direction across the board.

Ethical AI Use as a Management Responsibility

Given the risks vs. rewards, understanding the impact of AI use in organizations is of the utmost importance. Gupta et al. (2026) explain that generative AI offers major benefits, supporting education, training and content creation, but it also raises serious ethical risks, including: (See disclaimer 2)

Establishing clear ground rules and practical guidelines is necessary to build a strong foundation for the ethical use of AI in the workplace.

This can help employees understand, in practical terms, processes that emphasize:

Ethical Use of AI

What do we mean when we talk about “ethical” use of AI? Let’s start with transparency. Employees should be asked to identify when and how AI was used by disclosing what was generated by AI vs. what was authored by humans. This can build trust among team members with varying AI skills by clearly demonstrating the source of deliverables.

Privacy and Confidentiality

Privacy and confidentiality considerations are especially important regarding sensitive client and employee information. Employees must be aware of exactly what can and cannot be entered into AI copilots. While many organizations have internal AI systems that alleviate these concerns, the use of public, external AI tools runs the risk of unintentionally sharing sensitive data.

Accuracy, Fact Checking and Verification

Additionally, clear expectations should be established for fact-checking, citations and escalation to ensure accuracy and verification of AI-generated output. The role of a manager includes responsibility for employees’ final work product, but a shared approach can build trust through mutual accountability. The goal is to communicate expectations clearly enough that employees feel confident verifying authenticity and accuracy themselves.

Fairness and Bias Awareness

Fairness and bias awareness are additional aspects of this verification process that must be addressed. Large language models (LLMs) are AI systems trained on massive amounts of text to predict and generate text, allowing them to summarize, draft and answer questions. Because they learn patterns from their training data, including any biases that data contains, they are not immune to error, so checking for biased language or recommendations is crucial. For example, an AI prompt seeking quotes on motivation may result in content that focuses solely on quotes by men, excluding valuable contributions by women.

Human Judgment and Accountability

Ultimately, human judgment remains the last and best line of defense. Accountability should be continually reiterated, with the final responsibility remaining on employees and managers for decisions and work. A collaborative environment can foster a supportive, growth-oriented mindset toward ethical, responsible AI use.

A Practical Playbook: Setting Norms and Ground Rules

Once expectations are established, managers can craft a short set of implementable team rules, including approved tools, disclosure expectations, verification steps and data restrictions, that make AI use consistent, transparent and low risk. This can help provide a level of protection for all involved.

For starters, a “copilot working agreement” can establish shared norms and standards. It can include a list of acceptable AI tools and approved use cases. Data boundaries should be clearly articulated so employees know what must never be entered into AI copilots. A set of disclosure rules, such as an “AI-assisted” label for deliverables, lets others know when AI was used and to what extent. Controls such as a verification checklist or, for high-stakes outputs, peer reviews provide multiple sets of eyes as an additional guardrail.
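For teams that like to keep such an agreement in a checkable form, the elements above (approved tools, approved use cases, data boundaries, a disclosure label and peer review for high-stakes work) could be captured roughly as follows. This is a minimal sketch, not a prescribed format: the class name, field names, tool names and data categories are all hypothetical and would need to match an organization's own policies.

```python
from dataclasses import dataclass, field


@dataclass
class CopilotWorkingAgreement:
    """Hypothetical team agreement; all names and values are illustrative."""

    approved_tools: set[str] = field(default_factory=set)
    approved_use_cases: set[str] = field(default_factory=set)
    restricted_data: set[str] = field(default_factory=set)  # never enter into a copilot
    disclosure_label: str = "AI-assisted"

    def review(self, tool, use_case, data_types, labeled,
               high_stakes=False, peer_reviewed=False):
        """Return plain-language issues; an empty list means no rule was broken."""
        issues = []
        if tool not in self.approved_tools:
            issues.append(f"Tool not on the approved list: {tool}")
        if use_case not in self.approved_use_cases:
            issues.append(f"Use case not approved: {use_case}")
        # Sorted so the report reads the same way every time.
        for item in sorted(set(data_types) & self.restricted_data):
            issues.append(f"Restricted data entered: {item}")
        if not labeled:
            issues.append(f"Deliverable is missing the '{self.disclosure_label}' label")
        if high_stakes and not peer_reviewed:
            issues.append("High-stakes output needs a peer review")
        return issues


# Assumed example values, not recommendations.
agreement = CopilotWorkingAgreement(
    approved_tools={"internal-copilot"},
    approved_use_cases={"drafting", "summarizing"},
    restricted_data={"client records", "employee records"},
)

ok = agreement.review("internal-copilot", "drafting", {"meeting notes"}, labeled=True)
flagged = agreement.review("public-chatbot", "drafting", {"client records"},
                           labeled=False, high_stakes=True)
```

The check returns readable issues rather than a pass or fail flag, so it can prompt a conversation instead of acting as a gate, in keeping with the emphasis on competence over compliance.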

To increase buy-in and facilitate norming, modeling transparency is paramount. Managers should always disclose their own AI use to reduce stigma and set expectations. A focus on impact versus volume helps employees at varying stages of AI expertise feel more at ease in gaining experience using copilots in their own work. For employees wishing to increase their AI skills, managers can lean into training for competence over compliance by concentrating on prompting basics, evaluation skills and how to spot bias. As AI evolves, so too will the need for continual assessment of internal practices for relevancy and accuracy.

How to Evaluate Work Fairly When AI Is Involved

Once clear expectations and practices have been established, it is important to ensure work is evaluated fairly and equitably, regardless of its source. Managers should use performance measures that show how an employee made decisions, such as their reasoning, the quality of their sources and how they checked accuracy. This way, employees are rewarded for good thinking and accountability, not just for producing a lot of output. Critical thinking is also enhanced by encouraging employees not only to think through their processes but also to articulate them in a meaningful way. The integration of AI tools presents profound changes in the way employees think, decide and create, according to Oschinsky & Nguyen (2025). (See disclaimer 3)

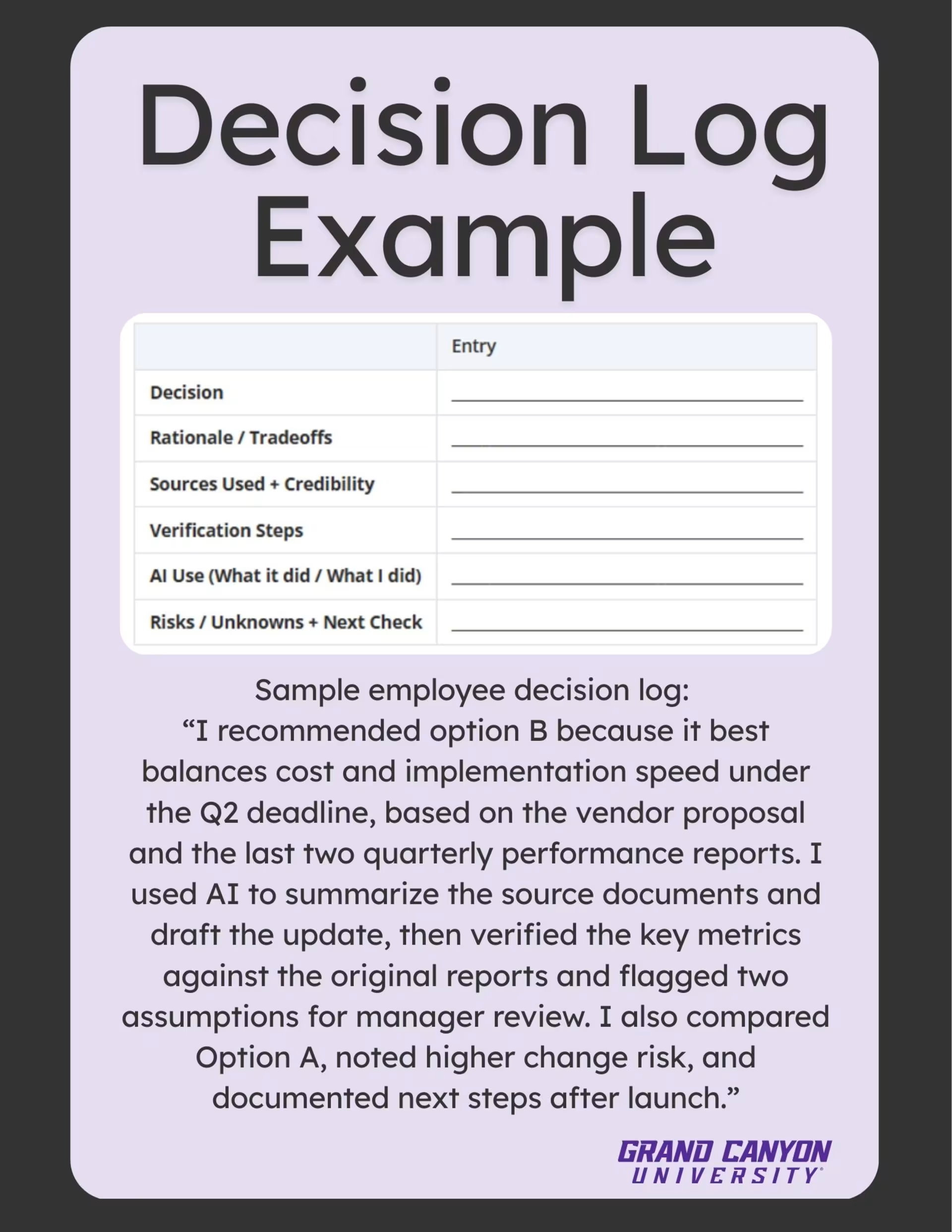

Ways to shift to more holistic assessments that reveal thinking can include short decision logs or rationale notes explaining choices and trade-offs, a joint review of source quality and verification steps, or spot checks for accuracy, tone and policy alignment. It can also help to distinguish between using AI to draft ideas and using it for actual decision-making, which may require higher scrutiny.

Here’s an easy-to-use template for managers:

A decision log can help break down the process, allowing the employee to demonstrate how the AI copilot’s output was evaluated to reach the final decision and giving the manager a clear picture of the outcome. In fact, this may offer managers more insight into an employee’s critical thinking process than ever before, enabling improved relationship building through collaboration. As Odiaka & Chang (2025) report, “maintaining a healthy communication channel between managers and subordinates benefits engagement, working morale and overall performance.” (See disclaimer 4)
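For teams that track work digitally, one possible shape for a decision log entry is sketched below. It is only an illustration under stated assumptions: the field names are hypothetical, chosen to mirror the elements discussed above (what AI contributed, what the employee contributed, the rationale, the sources reviewed and the verification steps), and the example values are invented.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionLogEntry:
    """Hypothetical decision log record; field names are illustrative."""

    deliverable: str
    ai_contribution: str       # what the copilot drafted or suggested
    human_contribution: str    # what the employee wrote, changed or decided
    rationale: str             # choices made and trade-offs considered
    sources_checked: list[str] = field(default_factory=list)
    verification_steps: list[str] = field(default_factory=list)

    def missing_items(self):
        """List the parts of the entry that still need to be filled in."""
        missing = []
        if not self.rationale.strip():
            missing.append("rationale")
        if not self.sources_checked:
            missing.append("sources_checked")
        if not self.verification_steps:
            missing.append("verification_steps")
        return missing


# Invented example values for illustration only.
complete = DecisionLogEntry(
    deliverable="Quarterly project update",
    ai_contribution="First draft of the summary and bullet points",
    human_contribution="Rewrote the risk section and chose the recommendation",
    rationale="Picked option B: slower rollout, lower client risk",
    sources_checked=["Project tracker export", "Client meeting notes"],
    verification_steps=["Recalculated totals by hand", "Checked tone against policy"],
)

draft = DecisionLogEntry(
    deliverable="Vendor comparison",
    ai_contribution="Comparison table",
    human_contribution="None yet",
    rationale="Leaning toward vendor A on cost",
)

complete_gaps = complete.missing_items()
draft_gaps = draft.missing_items()
```

An entry with gaps is a prompt for a joint review rather than a mark against the employee: the manager can see at a glance which parts of the thinking have not yet been written down.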

The New Leadership Skill Is “AI-Enabled Trust”

By now it’s clear: AI is here to stay. Adapting to this new reality is a must for organizations, and especially for managers responsible for its use among employees. Sustainable AI gains come from transparent practices and ethical safeguards that protect people, performance and organizational culture. While productivity gains have always been a focus, the shift to AI copilots calls for more: clear team norms and expectations, opportunities for learning and development, holistic performance evaluation that encourages employee input, and shared accountability that fosters mutual trust and transparency among team members.

Leaders who get this right will treat AI like any other powerful tool by setting boundaries, training people and checking work. Starting small can go a long way by piloting a few approved tools, such as:

Revisiting these norms often as tools evolve is crucial to keep up. Most importantly, keep conversations human by asking employees:

When managers model AI trust and transparency, encouragement and thoughtful verification, teams learn that speed matters, but trust matters more.

Program Spotlight: MSOL at GCU

If you are seeking the skills and foundational knowledge that are readily transferable to a broad spectrum of career fields in management, Grand Canyon University’s Colangelo College of Business offers the Master of Science in Leadership. The program focuses on leading people through change, shaping ethical culture, improving communication and building high-trust teams, exactly the management capabilities needed when AI alters workflows, decision accountability and expectations.

Coursework in leadership theory and innovation, organizational development and strategic influence can help managers set transparent AI use standards, protect confidentiality and align technology with mission and values. These skills are especially important as AI reshapes manager–employee dynamics, making it essential for leaders to communicate clearly, build trust and guide teams through new expectations. The program is ideal for managers who must lead responsibly every day.
